
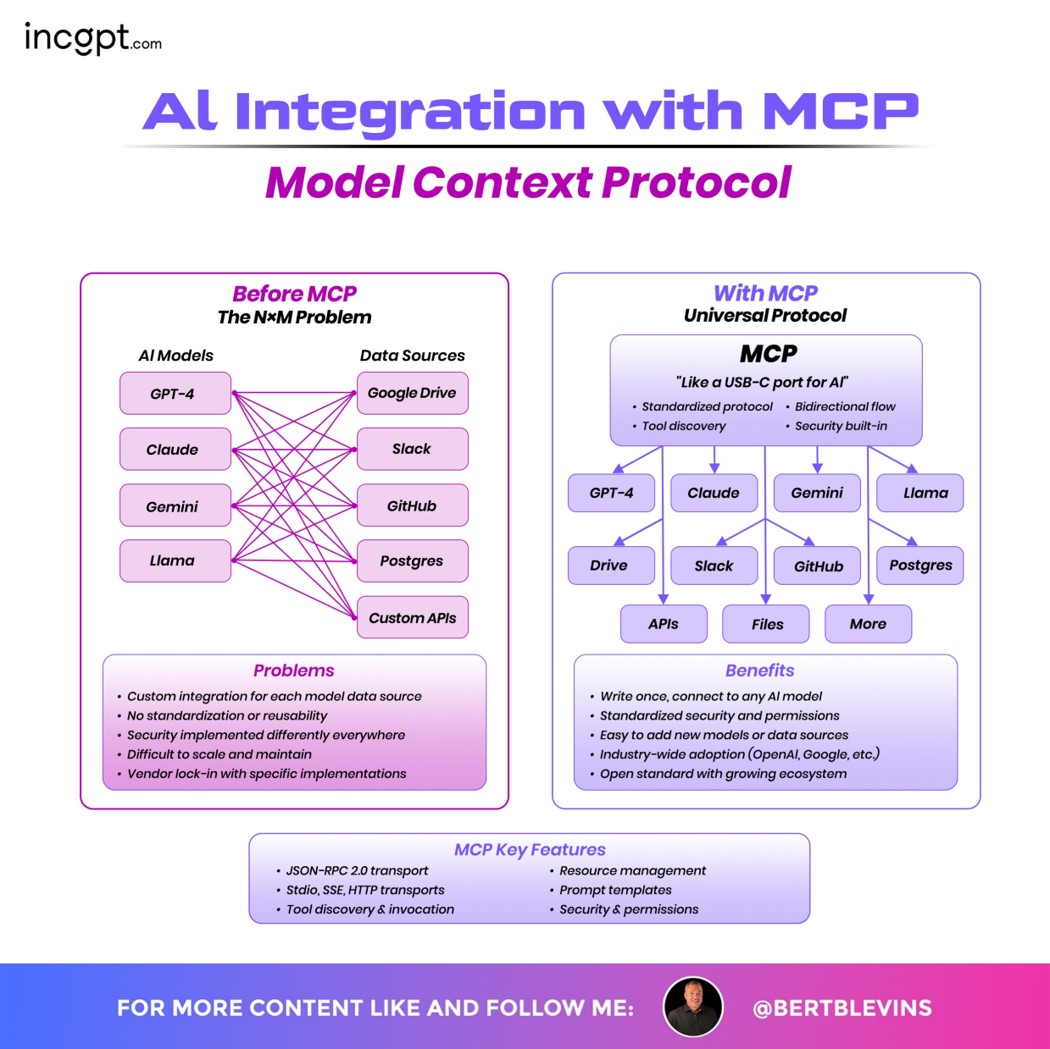

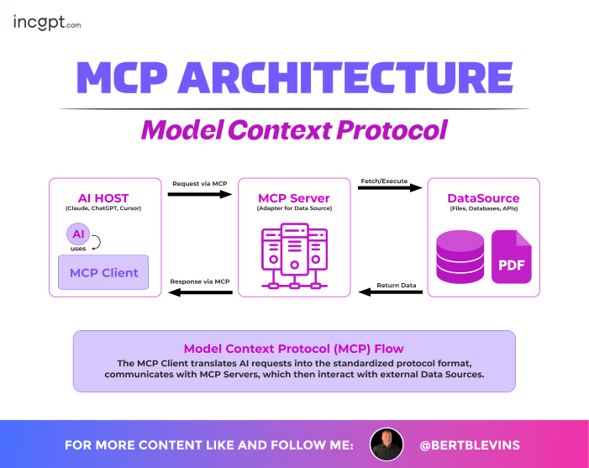

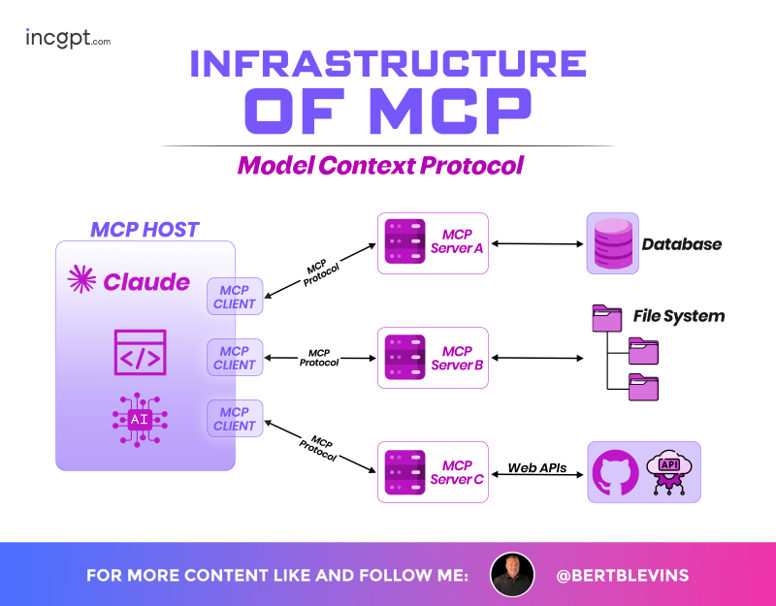

Model Context Protocol (MCP) is a standardized way for AI models to securely interact with tools, data, and services. It simplifies integration, improves scalability, and enables safer AI workflows.

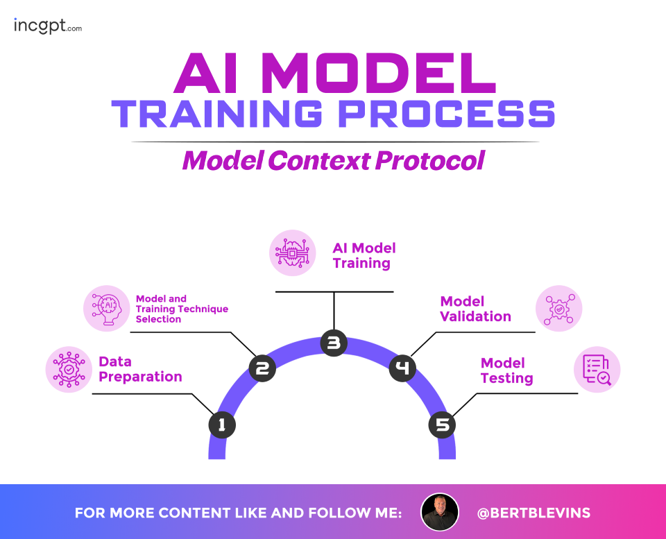

The AI model training process shown alongside the Model Context Protocol is a five-step workflow. The steps are connected in a circular flow, emphasizing the iterative nature of the process, with icons representing the key activities: data handling, technique selection, training execution, validation checks, and testing evaluations.

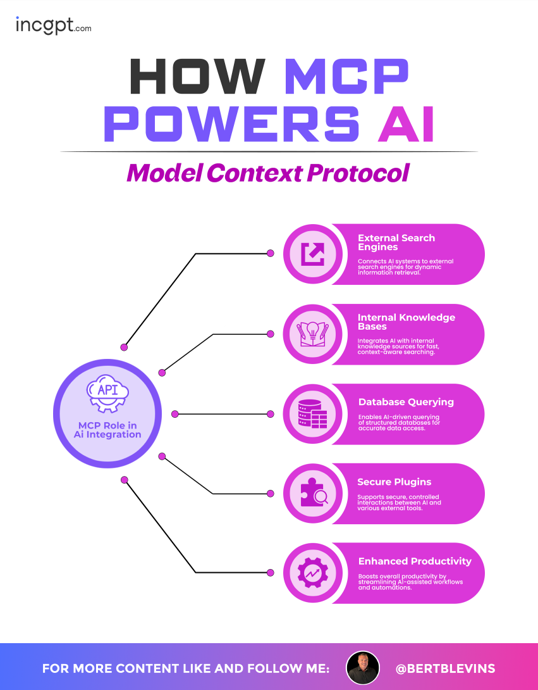

The Model Context Protocol (MCP) acts as an API-centric hub for AI integration, connecting models to five key areas: External Search Engines for dynamic information retrieval, Internal Knowledge Bases for context-aware searching, Database Querying for accurate data access, Secure Plugins for controlled interactions with external tools, and Enhanced Productivity for AI-assisted workflows and automations.

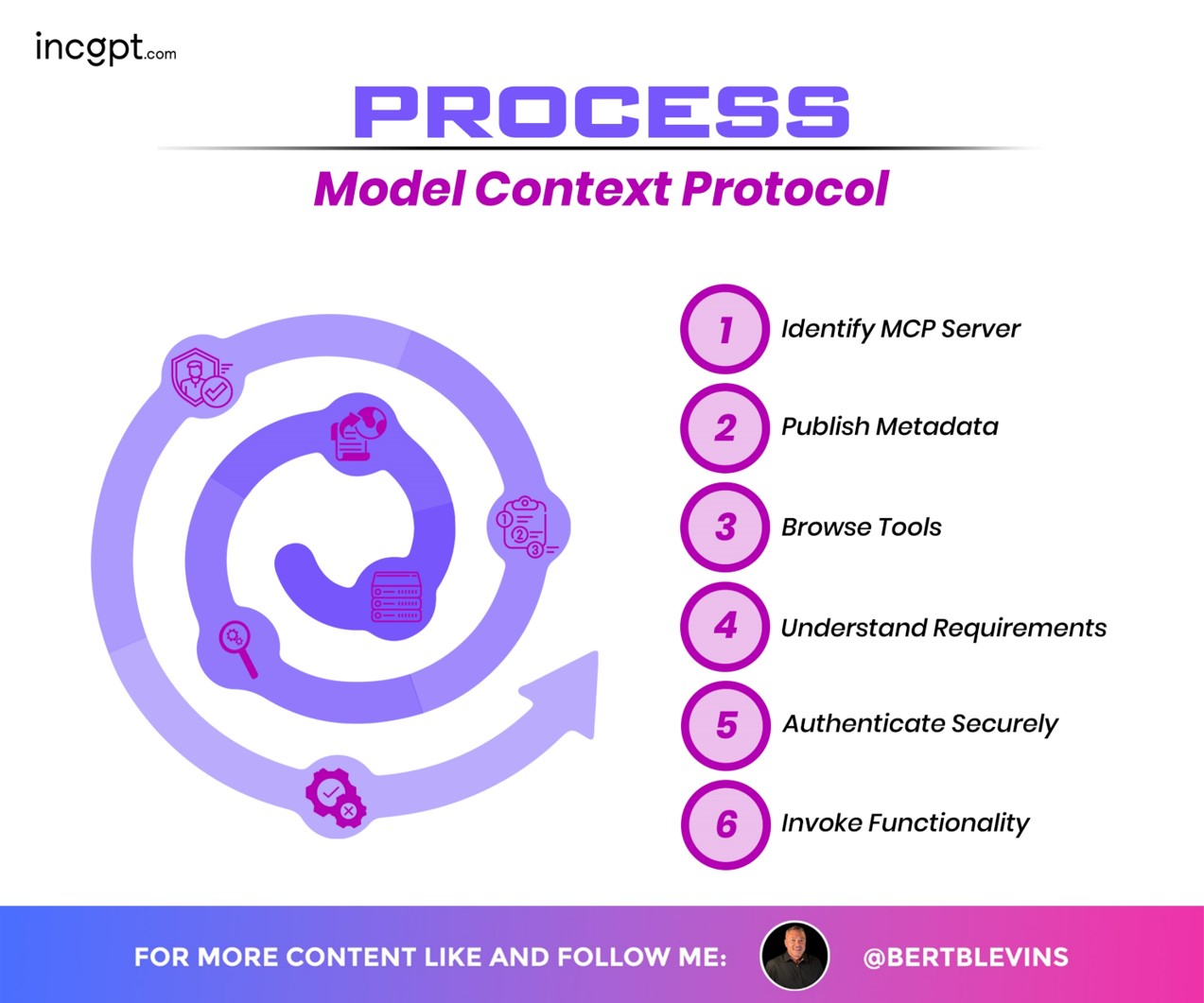

The Model Context Protocol (MCP) process is outlined through a six-step workflow depicted in a spiral design: 1) Identify MCP Server, 2) Publish Metadata, 3) Browse Tools, 4) Understand Requirements, 5) Authenticate Securely, and 6) Invoke Functionality. Each step is visually represented with icons, guiding users through the process of engaging with MCP servers, from server identification to executing desired functions.
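The discovery and invocation steps can be sketched as JSON-RPC 2.0 messages. This is a minimal illustration, not a full client: it only builds the request payloads for step 3 (Browse Tools) and step 6 (Invoke Functionality). The `tools/list` and `tools/call` method names follow the MCP specification; the `jsonrpc_request` helper, the `get_weather` tool, and its `city` argument are hypothetical, and the identification, metadata, and authentication steps are left out.

```python
import json
from itertools import count

# Monotonic request ids; JSON-RPC 2.0 uses the id to match responses to requests.
_ids = count(1)


def jsonrpc_request(method, params=None):
    """Build a JSON-RPC 2.0 request object (hypothetical helper)."""
    message = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


# Step 3 (Browse Tools): ask the server which tools it exposes.
list_tools = jsonrpc_request("tools/list")

# Step 6 (Invoke Functionality): call one tool by name with arguments.
# "get_weather" and its "city" argument are assumptions for illustration.
call_tool = jsonrpc_request(
    "tools/call",
    {"name": "get_weather", "arguments": {"city": "San Francisco"}},
)

print(json.dumps(list_tools))
print(json.dumps(call_tool, indent=2))
```

In a real session these payloads would be sent over one of the supported transports after the connection has been set up and authenticated.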

Before MCP, integrations suffered from the “N×M problem”: each AI model (GPT-4, Claude, Gemini, Llama) needed its own complex, non-standardized connection to each data source (Google Drive, Slack, GitHub, Postgres, Custom APIs). MCP replaces this with a streamlined “Universal Protocol” approach. Likened to a “USB-C port for AI,” MCP offers a standardized protocol, bidirectional flow, tool discovery, and built-in security, connecting models to diverse data sources such as APIs and files.

Benefits include standardized security and permissions, easy addition of new models or data sources, industry-wide adoption (e.g., OpenAI, Google), and an open standard with a growing ecosystem. Key features include JSON-RPC 2.0 messaging, SSE/HTTP transports, tool discovery and invocation, resource management, and permission-based security.
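The arithmetic behind the N×M comparison can be made concrete with a short sketch. It assumes a simplified counting model: without a shared protocol, every model-source pair needs its own integration, while with a shared protocol each model needs one client and each source needs one server. The function names are hypothetical; the model and source lists are the ones named above.

```python
def point_to_point_integrations(n_models, n_sources):
    """Every model is wired to every data source separately."""
    return n_models * n_sources


def shared_protocol_integrations(n_models, n_sources):
    """One client per model plus one server per data source."""
    return n_models + n_sources


models = ["GPT-4", "Claude", "Gemini", "Llama"]
sources = ["Google Drive", "Slack", "GitHub", "Postgres", "Custom APIs"]

print(point_to_point_integrations(len(models), len(sources)))   # 20
print(shared_protocol_integrations(len(models), len(sources)))  # 9
```

Under this model, adding a sixth data source costs four new integrations in the point-to-point setup but only one with a shared protocol.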

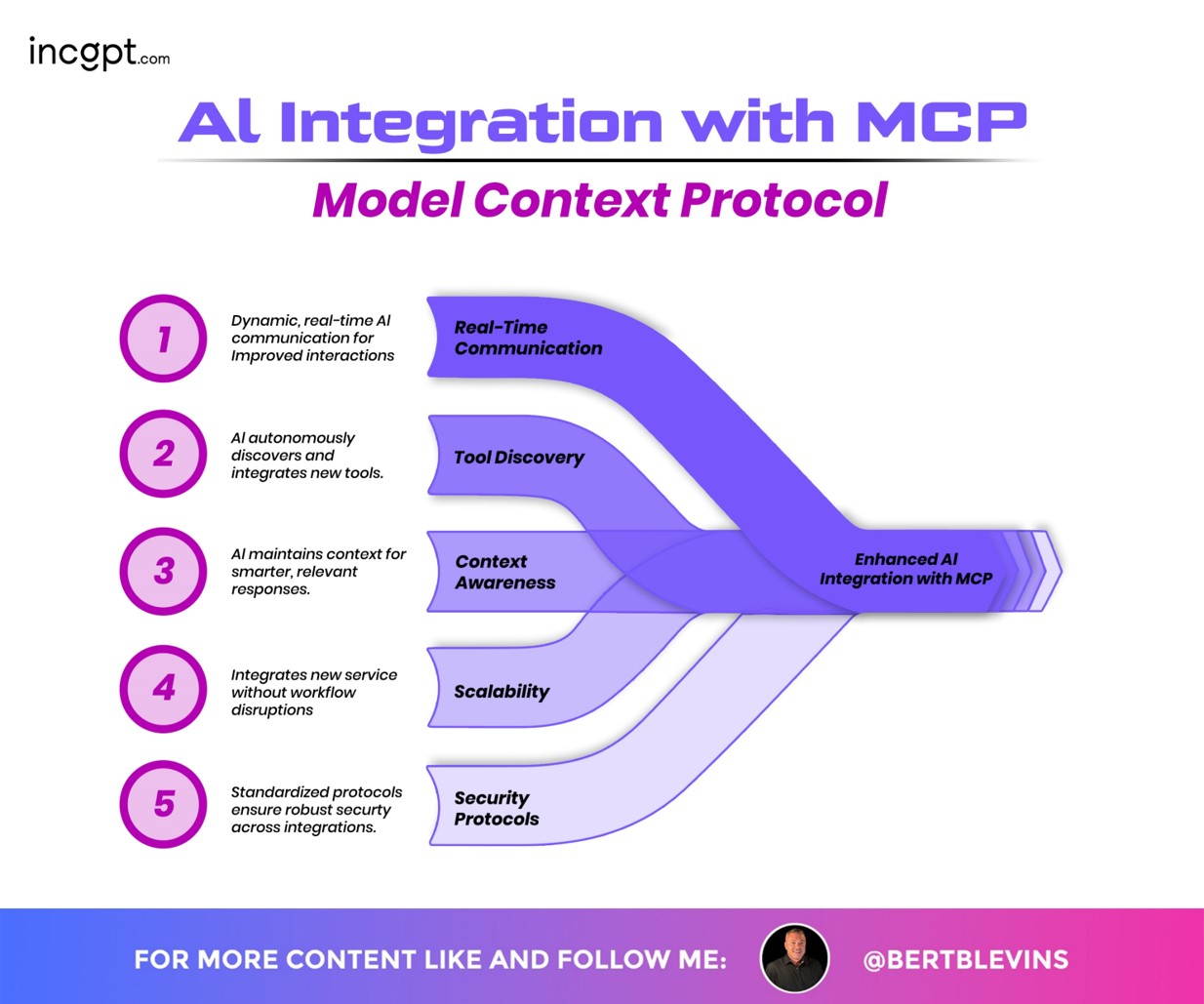

The infographic highlights five key aspects through which integration with the Model Context Protocol (MCP) enhances AI capabilities.

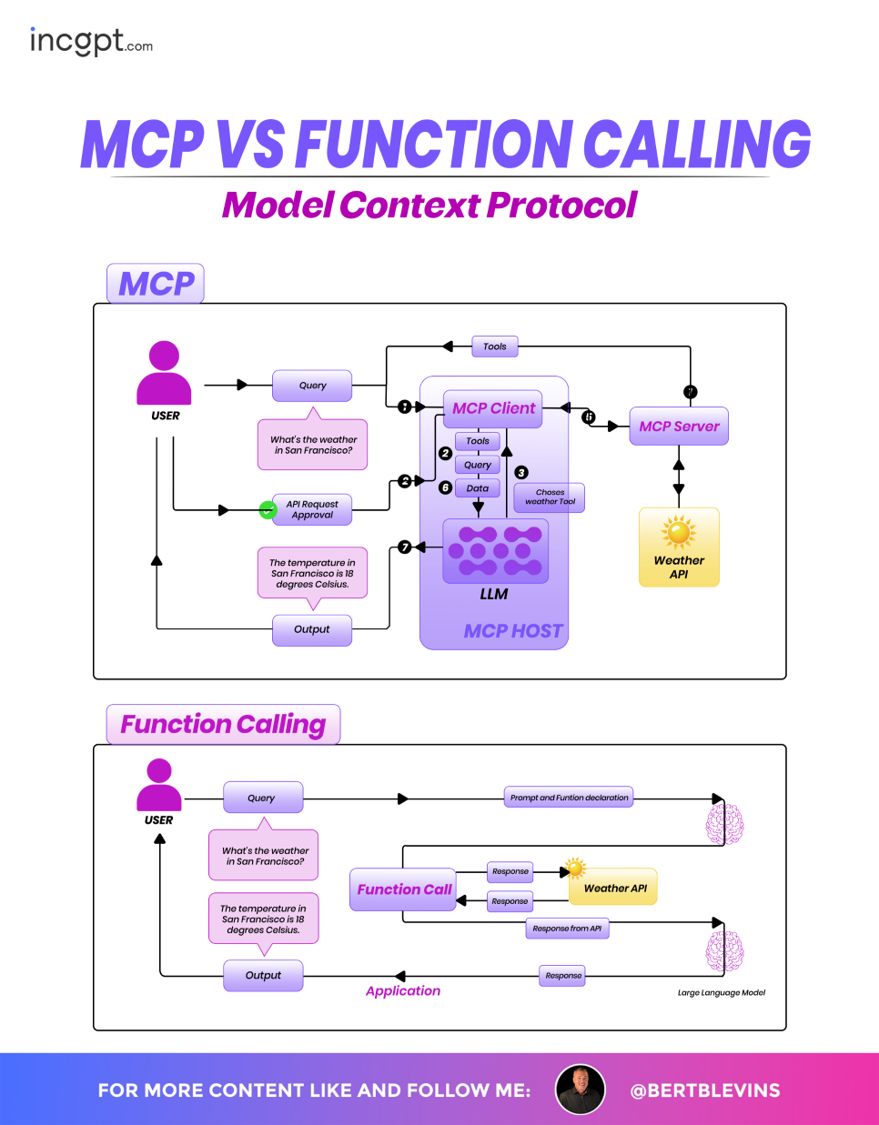

The diagram compares how MCP and Function Calling handle a user query about the weather in San Francisco. With MCP, the query goes to an MCP Client inside an MCP Host. After the API request is approved, the client selects a weather tool through the MCP Server, which queries the Weather API, and the LLM outputs the result (18 degrees Celsius). With Function Calling, the query is handled by a function-calling application, which uses a large language model to interpret the prompt and the function declaration, queries the Weather API directly, and returns the same result.
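The difference between the two paths can be sketched in a few lines of Python. Everything here is a toy stand-in: `weather_api`, `function_calling_app`, `ToyMCPServer`, and `ToyMCPClient` are hypothetical names, no real LLM or MCP SDK is involved, and the tool choice that a model would normally make is passed in by hand.

```python
def weather_api(city):
    """Stand-in for a real Weather API; always reports 18 (degrees Celsius)."""
    return 18


def function_calling_app(function_name, arguments):
    """Function Calling: the app owns the declarations and calls the API directly."""
    declared_functions = {"get_weather": weather_api}
    return declared_functions[function_name](**arguments)


class ToyMCPServer:
    """Exposes tools for discovery and invocation."""

    def __init__(self):
        self._tools = {"get_weather": weather_api}

    def list_tools(self):
        return sorted(self._tools)

    def call_tool(self, name, arguments):
        return self._tools[name](**arguments)


class ToyMCPClient:
    """Lives in the host; discovers tools and asks for approval before calling."""

    def __init__(self, server, approve):
        self.server = server
        self.approve = approve  # callback standing in for the approval step

    def run(self, tool_name, arguments):
        if tool_name not in self.server.list_tools():
            raise LookupError(f"unknown tool: {tool_name}")
        if not self.approve(tool_name, arguments):
            raise PermissionError(f"call to {tool_name} was not approved")
        return self.server.call_tool(tool_name, arguments)


args = {"city": "San Francisco"}
client = ToyMCPClient(ToyMCPServer(), approve=lambda name, arguments: True)

print(function_calling_app("get_weather", args))  # 18
print(client.run("get_weather", args))            # 18
```

Both paths return the same value; what changes is where the tool definitions live and that the MCP path adds discovery and an approval gate between the host and the API.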

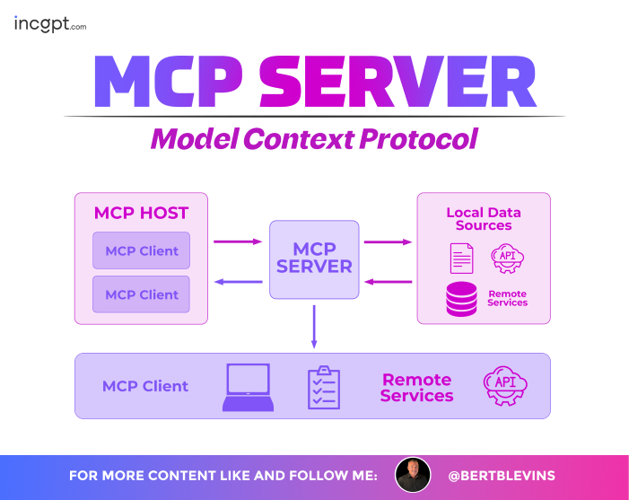

The MCP Server architecture shows how MCP Clients running in an MCP Host interact with the central MCP Server to access both Local Data Sources (e.g., files, APIs, remote services) and Remote Services via APIs. The design connects multiple MCP Clients to diverse data environments, with the server acting as a hub for data exchange.

The Model Context Protocol (MCP) ecosystem details its key components and their interactions within a unified framework. It highlights the MCP Client, which connects AI hosts like Claude and ChatGPT to the MCP Server, facilitating data retrieval from diverse sources such as files, databases, and APIs. The design emphasizes the protocol’s role in standardizing communication, with a focus on tool discovery, resource management, and secure data exchange.
